MULTI-SENSOR FUSION

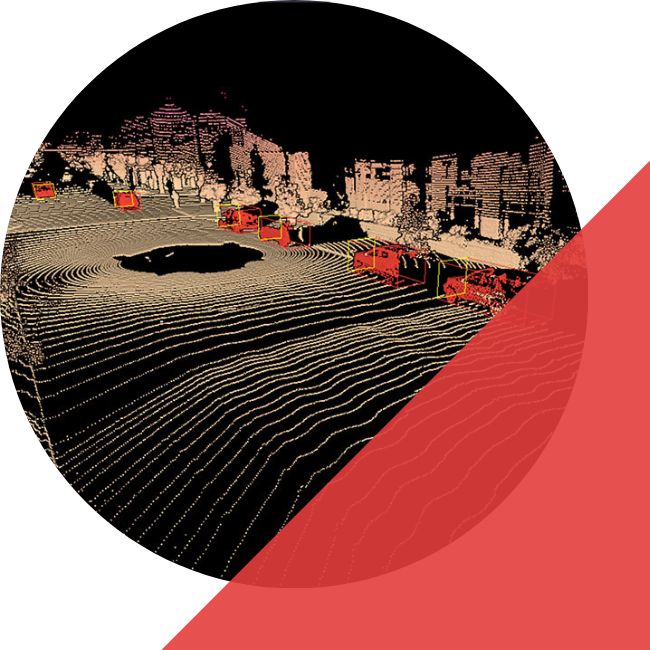

High-Quality, Scalable Multi-Sensor Fusion Data Annotation for Autonomous Mobility.

SENSOR FUSION FOR 3D PERCEPTION

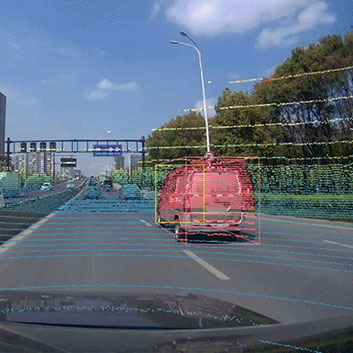

- Multi-sensor Fusion: At iMerit, we excel at multi-sensor annotation across camera, LiDAR, radar, and audio data, supporting enhanced scene perception, localization, mapping, and trajectory optimization.

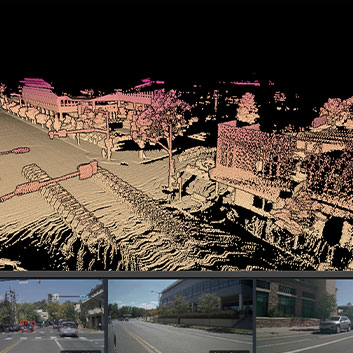

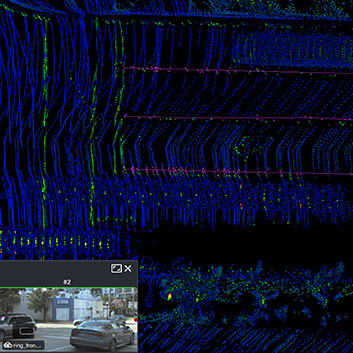

- Ground-Truth Accuracy: Our teams use 3D data points enriched with RGB or intensity values to analyze the imagery in each frame, ensuring that annotations achieve the highest ground-truth accuracy.

- Merged point cloud: A merged point cloud unifies the coordinates of all frames into a single frame of reference, eliminating manual frame-by-frame traversal and offering a holistic view of object sequences.

- APIs and Automation: Our tooling ecosystem supports automated annotation and validation, workflow customization, API integration, easy visualization, and cost-effective labeling.

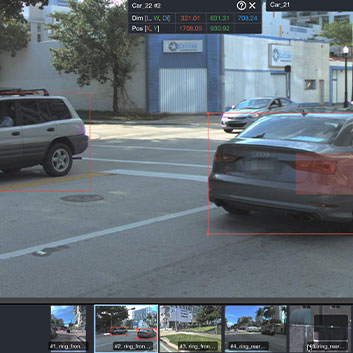

- Annotation types: Multi-sensor annotations include 2D/3D linking, 2D/3D bounding boxes, and 3D point cloud segmentation.
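To make the merged point cloud idea concrete, here is a minimal sketch of how per-frame LiDAR points can be expressed in one shared frame of reference. It assumes each frame comes with a 4x4 row-major homogeneous sensor-to-world pose (for example, from localization or SLAM). The helpers `transform_point` and `merge_point_clouds` are hypothetical illustrations of the general technique, not iMerit's tooling or API.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
# Assumption: each pose is a 4x4 row-major homogeneous transform that maps
# sensor coordinates into one shared world (map) frame.
Pose = Sequence[Sequence[float]]


def transform_point(pose: Pose, point: Point) -> Point:
    """Apply a 4x4 sensor-to-world transform to a single (x, y, z) point."""
    x, y, z = point
    # Rotate/scale with the upper-left 3x3 block, then add the translation column.
    wx, wy, wz = (
        pose[r][0] * x + pose[r][1] * y + pose[r][2] * z + pose[r][3]
        for r in range(3)
    )
    return (wx, wy, wz)


def merge_point_clouds(
    frames: Sequence[Tuple[Pose, Sequence[Point]]],
) -> List[Tuple[int, Point]]:
    """Hypothetical helper: express every frame's points in the shared world
    frame, tagging each point with the index of the frame it came from."""
    merged: List[Tuple[int, Point]] = []
    for frame_idx, (pose, points) in enumerate(frames):
        for point in points:
            merged.append((frame_idx, transform_point(pose, point)))
    return merged


# Usage: the vehicle moves 10 m forward along x between two frames.
identity = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
moved_10m = [[1, 0, 0, 10], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]

# The same static pole is seen 5 m ahead in frame 0 and 5 m behind in frame 1.
merged = merge_point_clouds([
    (identity, [(5.0, 0.0, 0.0)]),
    (moved_10m, [(-5.0, 0.0, 0.0)]),
])
print(merged)  # [(0, (5.0, 0.0, 0.0)), (1, (5.0, 0.0, 0.0))]
```

Because a static object such as the pole lands on the same world coordinates in every frame, it can be labeled once in the merged view rather than revisited frame by frame, while the frame index keeps each point traceable to its source sweep.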

MODALITIES WE SUPPORT

2D IMAGES

2D VIDEO

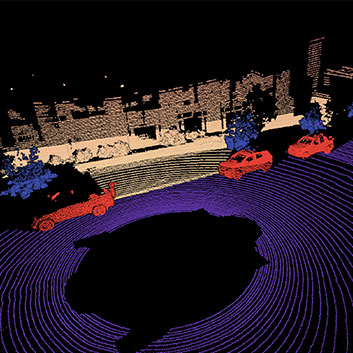

3D POINT CLOUD

RADAR

AUDIO

THERMAL AND INFRARED

CAPABILITIES

3D SEGMENTATION

Object Tracking

3D Point Cloud

3D Bounding Boxes

3D Polygon Annotation

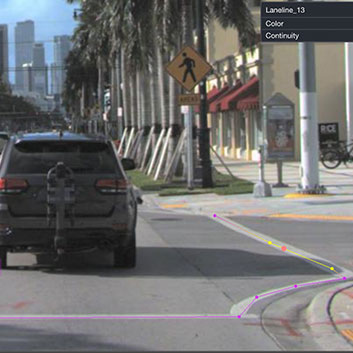

3D POLYLINE ANNOTATION

Polylines for lanes, curbs, road edges, and boundaries, used for mapping, localization, and planning constraints.

2D Bounding Boxes

2D Polygon Annotation

2D Polyline Annotation

3D Multi-Sensor Labeling Tool

Case Studies

We partnered with a client to support data annotation across 2D images and 3D point clouds. Because 3D perception systems depend heavily on data quality, the client needed targets such as lane markings, road boundaries, and traffic lights identified in LiDAR frames.

Using our human-in-the-loop workflows, we labeled poles, pedestrians, signs, cars, and barriers in 3D LiDAR frames seamlessly and accurately.

Achieving Faster, More Accurate Object Detection for Autonomous Mobility

Rapid Scalability

All-Sensor Support

High Quality

Tool Ecosystem

Custom Engineering

Security

Trusted & secure

At iMerit, data security and privacy are built into every workflow. Our platform features strict access controls, granular data partitioning, and encryption for sensitive information, and it complies with applicable data privacy regulations and industry standards. Detailed logging, monitoring, and audit trails ensure complete transparency and traceability, keeping every interaction with your data secure and accountable.

GETTING STARTED!

Make sensor fusion training data reliable at scale. iMerit delivers consistent 2D and 3D annotations across synchronized sensor streams with secure workflows and measurable QA.