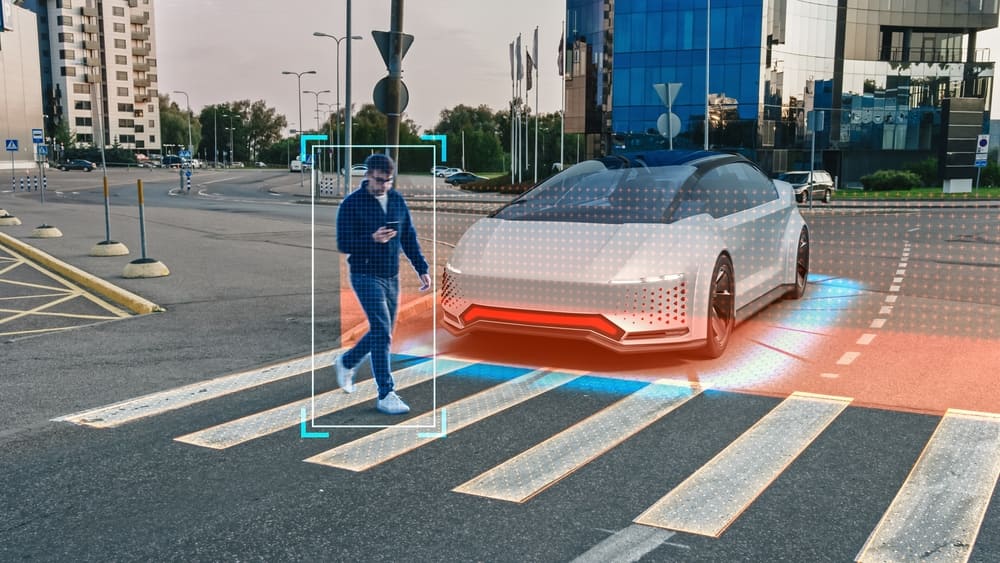

The sensor fusion market continues to grow as autonomous vehicle (AV) programs worldwide demand richer, more reliable perception systems. Modern driverless vehicles combine onboard cameras, LiDAR sensors, and millimeter-wave radars to capture real-time environmental data, monitor changes in their surroundings, and make informed driving decisions. Each sensor contributes something unique: cameras provide dense semantic information but lack precise depth; LiDAR delivers accurate 3D spatial data but with sparse resolution; and radar maintains stable performance in adverse weather conditions where optical sensors struggle.

Integrating and labeling the data that flows from these sensors, however, remains one of the most demanding challenges in AV development. As fusion architectures evolve and datasets grow, annotation teams have to keep pace with increasing complexity while maintaining the ground-truth accuracy that safety-critical models require.

7 Challenges in LiDAR-Camera-Radar Fusion Data Labeling

With multi-sensor fusion established as the preferred perception approach for autonomous mobility, the paradigm of data annotation has shifted dramatically. Traditional workflows that handled 2D images and 3D point clouds separately have given way to integrated 2D-3D sensor fusion annotation, introducing a new set of data challenges.

Scaling LiDAR-Camera Fusion Across Huge 3D Datasets

AV sensor suites generate enormous volumes of data per driving hour. A single vehicle equipped with multiple LiDAR units, cameras, and radars can produce terabytes of raw recordings in a single day of testing. Efficiently processing, storing, organizing, and labeling this data requires robust infrastructure and well-designed annotation pipelines. Without scalable data management systems, teams risk bottlenecks that delay model training and slow iteration cycles.

As next-generation LiDAR units push to 128 channels and beyond, point cloud density increases further, compounding the data volume challenge. Datasets like DurLAR, which capture 2048×128 panoramic images from a 128-channel LiDAR, illustrate the trajectory of increasing data richness that labeling operations must accommodate.
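To give a rough sense of scale, the sketch below estimates raw point throughput for a single sensor with 2048×128 resolution. The 10 Hz scan rate and the 16 bytes per point (four 32-bit floats for x, y, z, and intensity) are illustrative assumptions, not figures taken from DurLAR or any specific device.

```python
# Back-of-the-envelope data volume for one 128-channel LiDAR.
# ASSUMPTIONS (not from the source): 10 Hz scan rate, 16 bytes per point.
H_RES = 2048          # horizontal samples per scan (from the 2048x128 image size)
CHANNELS = 128        # vertical channels
SCAN_RATE_HZ = 10     # assumed
BYTES_PER_POINT = 16  # assumed: x, y, z, intensity stored as float32


def lidar_volume(hours: float = 1.0) -> dict:
    """Estimate points per scan and raw storage for the given recording time."""
    points_per_scan = H_RES * CHANNELS
    bytes_per_second = points_per_scan * BYTES_PER_POINT * SCAN_RATE_HZ
    total_bytes = bytes_per_second * 3600 * hours
    return {
        "points_per_scan": points_per_scan,
        "mib_per_second": bytes_per_second / 2**20,
        "gib_total": total_bytes / 2**30,
    }


if __name__ == "__main__":
    print(lidar_volume(1.0))
```

Under these assumptions, one sensor yields 262,144 points per scan and roughly 140 GiB of raw points per recorded hour, before any camera or radar data is counted.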

Reducing Labeling Time with Automation and Pre-Labeling

Creating ground truth for multi-sensor fusion models is inherently time-consuming. Annotators must label video frame by frame, align 3D cuboids with point cloud data, and verify consistency across sensor views. This labor-intensive process is essential for training machine learning-based detectors and evaluating the performance of existing detection algorithms.

Pre-labeling with AI-assisted detection models can accelerate throughput, but the resulting labels still require careful human review, especially in safety-critical domains where annotation errors can propagate into dangerous model behavior. The most effective workflows combine automated pre-annotation with structured human verification stages to reduce time-per-frame without sacrificing quality.
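One way to picture the combination of pre-annotation and structured verification is a triage step that sends every pre-label to a human, but routes it to a lighter or heavier review queue. The sketch below is a minimal illustration; the `PreLabel` type, the 0.9 threshold, and the list of safety-relevant classes are hypothetical choices, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    frame_id: int
    category: str
    confidence: float  # detector score in [0, 1]


def triage(prelabels, accept_threshold=0.9, safety_classes=("pedestrian", "cyclist")):
    """Split pre-labels into a quick-check queue and a full-review queue.

    Nothing is auto-accepted: both queues are still reviewed by a person.
    Low-confidence labels and safety-relevant classes get the full review.
    """
    quick_check, full_review = [], []
    for label in prelabels:
        needs_full = (
            label.confidence < accept_threshold
            or label.category in safety_classes
        )
        (full_review if needs_full else quick_check).append(label)
    return quick_check, full_review


if __name__ == "__main__":
    labels = [
        PreLabel(0, "car", 0.97),
        PreLabel(0, "car", 0.55),
        PreLabel(1, "pedestrian", 0.99),
    ]
    quick, full = triage(labels)
    print(len(quick), "quick checks,", len(full), "full reviews")
```

Tuning the threshold trades reviewer time against the risk of a weak pre-label slipping through a quick check, so it would normally be set from measured error rates rather than fixed up front.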

Managing Cross-Sensor Calibration, Occlusions, and Temporal Consistency

Each sensor in a fusion stack has unique characteristics, fields of view, and coordinate systems. Projecting LiDAR point clouds into camera coordinate frames (and vice versa) requires precise extrinsic and intrinsic calibration. Even small calibration errors produce misaligned annotations that degrade model performance. Beyond calibration, annotators have to handle occlusions, where an object visible in one sensor modality is blocked or partially hidden in another.
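The projection step can be sketched with a plain pinhole camera model. This is a simplified illustration that ignores lens distortion; the function name, argument layout, and example calibration values are assumptions made for the example, and production pipelines would use the calibration format of their own sensor rig.

```python
def project_lidar_to_image(points, R, t, fx, fy, cx, cy, width, height):
    """Project LiDAR points (x, y, z) into pixel coordinates.

    R (3x3 nested lists) and t (length 3) are the LiDAR-to-camera extrinsics;
    fx, fy, cx, cy are pinhole intrinsics. Lens distortion is ignored.
    Returns a list of (u, v, depth) for points that land inside the image.
    """
    pixels = []
    for p in points:
        # Extrinsics: move the point into the camera frame.
        xc, yc, zc = (
            sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
        )
        if zc <= 0:  # behind the camera, cannot be seen
            continue
        # Intrinsics: perspective divide, then shift to pixel coordinates.
        u = fx * xc / zc + cx
        v = fy * yc / zc + cy
        if 0 <= u < width and 0 <= v < height:
            pixels.append((u, v, zc))
    return pixels


if __name__ == "__main__":
    # Assumed axis conventions: LiDAR x-forward/y-left/z-up,
    # camera x-right/y-down/z-forward, with both sensors at the same origin.
    R = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
    t = [0.0, 0.0, 0.0]
    pts = [(10.0, 0.0, 0.0), (10.0, -1.0, 0.0), (-5.0, 0.0, 0.0)]
    print(project_lidar_to_image(pts, R, t, 1000.0, 1000.0, 640.0, 360.0, 1280, 720))
```

Because the pixel offset scales with `fx * xc / zc`, a small rotation error in `R` shifts distant points by many pixels, which is why modest calibration drift shows up as visibly misaligned cuboids.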

Temporal synchronization adds another layer of complexity: sensor data captured at slightly different timestamps must be aligned so that moving objects appear in consistent positions across modalities. Managing these factors at scale demands both specialized tooling and trained annotators who understand cross-sensor geometry.
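A common first step toward temporal alignment is nearest-timestamp matching between sensor streams. The sketch below assumes both streams carry timestamps on the same clock, in seconds and already sorted; the 50 ms tolerance is an illustrative value. It does not perform motion compensation, which moving objects would still need.

```python
from bisect import bisect_left


def match_nearest(lidar_ts, camera_ts, max_offset_s=0.05):
    """Pair each LiDAR timestamp with the closest camera timestamp.

    Both lists must be sorted, in seconds. Pairs farther apart than
    max_offset_s are dropped instead of being force-matched.
    """
    pairs = []
    for t in lidar_ts:
        i = bisect_left(camera_ts, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = camera_ts[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda c: abs(c - t))
        if abs(best - t) <= max_offset_s:
            pairs.append((t, best))
    return pairs


if __name__ == "__main__":
    lidar = [0.00, 0.10, 0.20]
    camera = [0.01, 0.09, 0.33]
    print(match_nearest(lidar, camera))
```

Dropping pairs that exceed the tolerance keeps badly synchronized frames out of the annotation queue, where they would otherwise produce cuboids that look wrong in one of the two views.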

Ensuring Consistency and Ground-Truth Quality

Maintaining consistent, high-quality annotations across a large multi-sensor dataset is a complex endeavor. As the number of annotators and the size of the dataset grow, so does the risk of label drift, where subtle inconsistencies accumulate over time. Effective quality control requires standardized labeling guidelines, multi-stage review processes, and real-time performance monitoring.
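One simple signal for label drift is how often two annotators agree on the same objects, tracked over time. The sketch below is a simplified illustration: it uses axis-aligned bird's-eye-view boxes instead of rotated 3D cuboids, and the shared object IDs and the 0.7 IoU threshold are assumptions made for the example.

```python
def bev_iou(a, b):
    """IoU of two axis-aligned bird's-eye-view boxes (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def agreement_rate(labels_a, labels_b, iou_threshold=0.7):
    """Share of objects on which two annotators agree.

    labels_a and labels_b map a shared object id to a box. An object counts as
    agreed when both annotators labeled it and their boxes overlap enough.
    """
    all_ids = set(labels_a) | set(labels_b)
    if not all_ids:
        return 1.0
    agreed = sum(
        1
        for oid in all_ids
        if oid in labels_a
        and oid in labels_b
        and bev_iou(labels_a[oid], labels_b[oid]) >= iou_threshold
    )
    return agreed / len(all_ids)


if __name__ == "__main__":
    annotator_a = {1: (0, 0, 4, 2), 2: (10, 10, 14, 12)}
    annotator_b = {1: (0, 0, 4, 2), 2: (12, 10, 16, 12), 3: (20, 0, 24, 2)}
    print(agreement_rate(annotator_a, annotator_b))
```

Computing this rate per batch or per week on a shared set of benchmark frames makes a slow decline visible before it spreads through the dataset.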

Research on LiDAR-camera fusion architectures, such as the feature-layer fusion strategies evaluated on the KITTI benchmark, indicates that even modest improvements in ground-truth quality can translate into measurable gains in detection accuracy across KITTI's easy, moderate, and hard difficulty levels.

Limits of Automation in Complex 3D Fusion Scenarios

Automated labeling methods have made significant progress in recent years, but they still offer limited flexibility when dealing with the intricacies of multi-modal sensor data. A model trained to auto-label objects in camera images may struggle with sparse LiDAR returns at long range, and vice versa.

Fusion-specific challenges, such as reconciling conflicting detections across modalities or labeling partially observed objects that appear in only one sensor stream, require human judgment that current automation can’t fully replicate. The most practical approach treats automation as an accelerator rather than a replacement for human expertise, reserving complex cases for domain-trained annotators.
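The split between automated handling and human judgment can be illustrated with a small reconciliation step that auto-accepts only detections on which both modalities agree. This is a minimal sketch under stated assumptions: detections are already expressed in a shared ground frame as `(category, x, y)` tuples, matching is greedy by center distance, and the 1.5 m gate is an illustrative value.

```python
import math


def reconcile(camera_dets, lidar_dets, max_dist_m=1.5):
    """Greedy match of detections from two modalities in a shared ground frame.

    Each detection is (category, x, y). Returns (agreed, needs_review), where
    needs_review holds unmatched detections and matches with conflicting classes.
    """
    agreed, needs_review = [], []
    unused = list(lidar_dets)
    for cam in camera_dets:
        nearest = min(
            unused, key=lambda det: math.dist(cam[1:], det[1:]), default=None
        )
        if nearest is None or math.dist(cam[1:], nearest[1:]) > max_dist_m:
            needs_review.append(("camera_only", cam))
            continue
        unused.remove(nearest)
        if cam[0] == nearest[0]:
            agreed.append((cam, nearest))
        else:
            needs_review.append(("class_conflict", cam, nearest))
    # Whatever LiDAR saw that no camera detection claimed also goes to a person.
    needs_review.extend(("lidar_only", det) for det in unused)
    return agreed, needs_review


if __name__ == "__main__":
    camera = [("car", 10.0, 2.0), ("pedestrian", 5.0, -1.0), ("car", 40.0, 0.0)]
    lidar = [("car", 10.3, 2.1), ("cyclist", 5.2, -1.1), ("car", 80.0, 3.0)]
    ok, review = reconcile(camera, lidar)
    print(len(ok), "agreed;", [item[0] for item in review])
```

The review queue is where domain-trained annotators add the most value: a camera-only object may be a sparse long-range return, and a class conflict often signals occlusion or an unusual object.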

Capturing Edge Cases with Real and Synthetic Multimodal Data

Edge cases, those rare and complex scenarios that standard data collection may not capture, represent some of the highest-risk situations for autonomous vehicles. Construction zones, emergency vehicles, unusual weather conditions, and unexpected pedestrian behavior all fall into this category. Real-world data collection alone often can’t provide sufficient coverage of these long-tail scenarios.

Synthetic data generation offers a powerful complement, enabling teams to systematically create multimodal training examples for conditions that are dangerous or impractical to capture on public roads. Datasets like SEVD, built in the CARLA simulator, demonstrate how synthetic pipelines can produce event-camera, RGB, depth, and segmentation data with perfect ground truth across controlled environmental conditions.

Similarly, the Adver-City dataset recreates adverse weather scenarios including fog, heavy rain, and blinding glare for collaborative perception testing. Integrating synthetic data into the labeling pipeline, and validating it against real-world distributions, allows AV teams to strengthen model robustness without relying solely on costly physical data collection.

Adapting Workflows to New LiDAR-Camera Fusion Architectures

The sensor fusion landscape evolves rapidly. New fusion architectures, from early and late fusion strategies to deep fusion models like DeepFusion and cross-view spatial feature approaches like 3D-CVF, demand different annotation formats and labeling conventions. The emergence of 4D radar as a complementary modality, as seen in datasets like V2X-Radar, adds yet another data stream that labeling teams must support. As researchers and AV companies adopt transformer-based and bird’s-eye-view (BEV) fusion models, annotation requirements shift accordingly.

Labeling operations need to be flexible enough to adapt to these changes without rebuilding workflows from scratch. That flexibility depends on modular pipeline design and close collaboration between annotation teams and perception engineers.

How iMerit Solves 3D Sensor Fusion Labeling for AVs

iMerit brings over a decade of experience in multi-sensor annotation for camera, LiDAR, radar, and audio data, supporting enhanced scene perception, localization, mapping, and trajectory optimization. With a full-time workforce of 5,500+ data annotation experts and 10+ delivery centers globally, iMerit combines purpose-built technology, domain-trained talent, and proven processes to address the full spectrum of 3D fusion labeling challenges.

Custom LiDAR-Camera Fusion Workflows for High Accuracy

iMerit’s human-in-the-loop model employs custom workflows tailored to the specific requirements of each AV program. These workflows are designed to handle the unique characteristics of multi-sensor fusion projects, from 2D/3D bounding box linking and 3D point cloud segmentation to panoptic segmentation and merged point cloud annotation.

Merged point cloud processing unifies all coordinates into a single frame, eliminating manual frame traversal and providing annotators with a holistic view of object sequences. By aligning workflow design with each client’s perception architecture, iMerit optimizes for both accuracy and throughput.
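The general idea of unifying per-frame points into one coordinate frame can be sketched as a rigid transform with each frame's sensor pose. This is a generic illustration, not iMerit's implementation: it assumes a planar pose `(x, y, yaw)` per frame and leaves height unchanged, whereas real pipelines typically use full 6-DoF poses.

```python
import math


def merge_point_clouds(frames):
    """Transform per-frame points into one shared world frame.

    frames is a list of (pose, points), where pose = (x, y, yaw_rad) is the
    sensor pose in the world frame and points are (x, y, z) in the sensor frame.
    Returns a flat list of (x, y, z, frame_index).
    """
    merged = []
    for idx, ((px, py, yaw), points) in enumerate(frames):
        c, s = math.cos(yaw), math.sin(yaw)
        for x, y, z in points:
            # Rotate by the sensor heading, then translate by its position.
            merged.append((c * x - s * y + px, s * x + c * y + py, z, idx))
    return merged


if __name__ == "__main__":
    frames = [
        ((0.0, 0.0, 0.0), [(1.0, 0.0, 0.0)]),
        ((5.0, 0.0, math.pi / 2), [(1.0, 0.0, 0.5)]),
    ]
    for point in merge_point_clouds(frames):
        print(point)
```

Keeping the frame index on every point lets a tool show a static object as one dense cluster while still tracing each point back to the frame it came from.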

Domain-Trained Teams for Rare and Safety-Critical Scenarios

Tackling edge cases and complex annotation scenarios requires more than general labeling skills. iMerit maintains a specialized workforce with curriculum-driven training in the autonomous vehicle domain. These teams have hands-on experience with the kinds of rare, safety-critical situations that standard automated labeling pipelines miss: unusual object configurations, heavy occlusion, adverse lighting, and ambiguous sensor returns. This domain expertise allows iMerit to deliver reliable annotations even in the most challenging labeling conditions.

Multi-Sensor Data Labeling Platform and Tool-Agnostic Integrations

iMerit’s Ango Hub platform is built to support multi-sensor fusion annotation with features including AI-powered auto-detection for pre-labeling, workflow customization, API integration, real-time reporting, and quality auditing. When clients have specific tooling requirements, iMerit trains its teams to work on proprietary platforms. iMerit also maintains partnerships with leading third-party annotation tools, including Datasaur.ai, Dataloop.ai, Segments.ai, and Superb.ai. This tool-agnostic approach is designed to fit into a client's existing data pipeline.

Multi-Stage Quality Control for Fusion Ground Truth

Quality assurance is embedded throughout iMerit’s annotation process. Every task is manually reviewed by highly trained expert annotators. Ango Hub supports multi-stage review workflows with configurable annotator and reviewer assignments, real-time issue tracking, benchmark tasks for performance measurement, and detailed analytics on labeler accuracy and throughput. This layered approach to QC ensures that ground-truth annotations meet the precision and safety standards required for AV perception systems.

Partnering with Leading AV Programs Worldwide

iMerit has extensive experience collaborating with top autonomous vehicle companies, providing data annotation across 2D images and 3D point clouds for advanced 3D perception systems. This track record includes target identification in LiDAR frames for lane markings, road boundaries, traffic lights, poles, pedestrians, signs, cars, and barriers. Working closely with leading AV programs gives iMerit valuable insight into evolving industry requirements and allows it to continuously refine its annotation practices to match the frontier of autonomous driving development.

Integrating Synthetic Data into the 3D Labeling and Evaluation Loop

As synthetic data becomes an increasingly important component of AV training pipelines, iMerit supports clients in validating and refining generated annotations. Synthetic datasets can offset the limitations of real-world data collection by providing the scalability needed for comprehensive model training. iMerit’s role in this pipeline includes quality validation of synthetic labels, ensuring that generated annotations align with real-world labeling standards, and integrating synthetic and real data within unified annotation workflows.

Partner with iMerit to Build Resilient AV Sensor Fusion Pipelines

Multi-sensor fusion in autonomous vehicles demands annotation solutions that can keep pace with rapidly evolving architectures, growing data volumes, and the relentless pursuit of safety. From LiDAR-camera-radar data labeling at scale to edge case curation and synthetic data validation, the challenges are significant but solvable with the right combination of expertise, technology, and process rigor.

iMerit provides end-to-end data labeling and annotation services for 3D sensor fusion in autonomous vehicles. With a workforce of 5,500+ full-time experts, custom workflows built on the Ango Hub platform, and a tool-agnostic integration model, iMerit delivers the accuracy and flexibility that leading AV programs require. iMerit has annotated billions of data points for autonomous use cases and continues to partner with the world’s most advanced mobility programs to build the perception systems that will define the future of safe autonomous driving.

Explore what a purpose-built sensor fusion labeling operation looks like for your program. Contact iMerit’s AV data team today.