3D SEGMENTATION

3D LABELS FOR PERCEPTION TEAMS

TYPES OF SEGMENTATION

iMerit tailors segmentation services to your project goals, balancing accuracy and throughput. Together, we define requirements and deploy custom workflows that scale with your annotation volume.

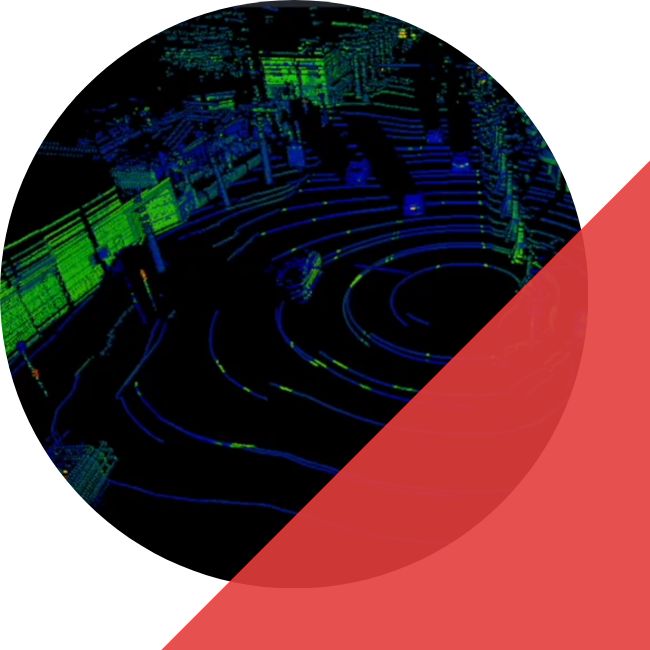

3D SEMANTIC SEGMENTATION

3D INSTANCE SEGMENTATION

3D PANOPTIC SEGMENTATION

Common Use Cases

AUTONOMOUS VEHICLES

ROBOTICS

MAPPING AND LOCALIZATION

INDUSTRIAL MOBILITY

AERIAL MOBILITY AND DRONES

OIL, GAS AND MINING

3D Segmentation for LiDAR Annotation

KEY FEATURES

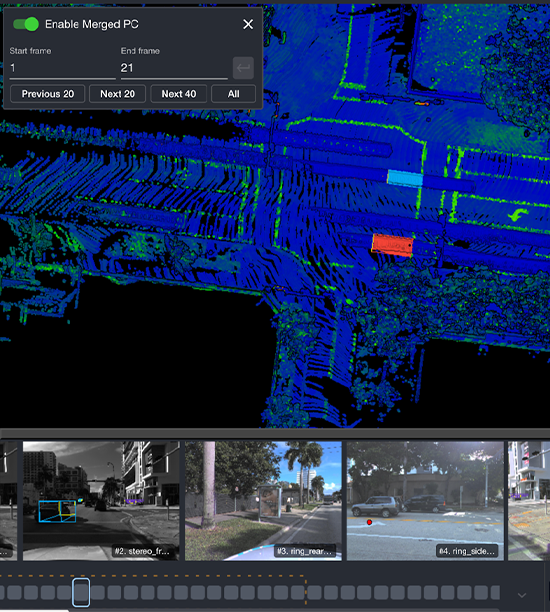

- Multi-Tool Annotation: Cuboids for fast 3D bounding boxes, polygons for irregular shapes, and brush tools for fine-grained segmentation when precision matters.

- Flexible Workflows: Switch annotation methods seamlessly based on object complexity, edge cases, and acceptance criteria.

- Fast Labeling Cycles: Combine high-throughput cuboids for bulk labeling with precision tools for complex geometry to reduce rework and QA loops.

- Native 3D Point Cloud Support: Annotate directly in LiDAR point clouds to preserve depth and spatial relationships, with optional projection workflows when needed.

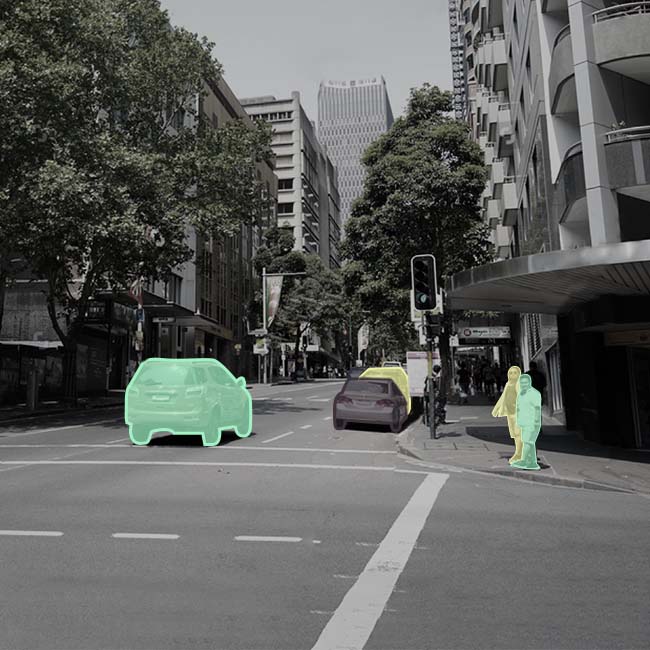

- Broad Class Coverage: Supports vehicles, pedestrians, cyclists, road infrastructure, vegetation, buildings, and custom taxonomies.
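To illustrate how fast cuboids and point-level segmentation can work together, the sketch below gives every LiDAR point inside an oriented cuboid that cuboid's class. This is a common way to pre-label points before edges are refined with brush or polygon tools. The cuboid format (center, length/width/height, yaw about the vertical axis), the function names, and the use of 0 for "unlabeled" are illustrative assumptions, not the format of any specific tool.

```python
import math

UNLABELED = 0  # hypothetical id for "no class assigned yet"


def point_in_cuboid(point, center, size, yaw):
    """True if an (x, y, z) point lies inside an oriented cuboid.

    center: (x, y, z) of the cuboid; size: (length, width, height);
    yaw: rotation about the vertical z axis, in radians.
    """
    dx, dy, dz = (p - c for p, c in zip(point, center))
    # Rotate the offset by -yaw so it is expressed in the cuboid's own axes.
    local_x = math.cos(yaw) * dx + math.sin(yaw) * dy
    local_y = -math.sin(yaw) * dx + math.cos(yaw) * dy
    length, width, height = size
    return (abs(local_x) <= length / 2
            and abs(local_y) <= width / 2
            and abs(dz) <= height / 2)


def prelabel_from_cuboids(points, cuboids):
    """Give each point the class of the first cuboid that contains it."""
    labels = []
    for point in points:
        label = UNLABELED
        for box in cuboids:
            if point_in_cuboid(point, box["center"], box["size"], box["yaw"]):
                label = box["class_id"]
                break  # in this sketch, the first matching cuboid wins
        labels.append(label)
    return labels


# Usage: a 4 m long cuboid rotated 90 degrees, so its length runs along y.
points = [(0.0, 1.5, 0.0), (1.5, 0.0, 0.0)]
boxes = [{"center": (0, 0, 0), "size": (4, 1, 1), "yaw": math.pi / 2, "class_id": 7}]
print(prelabel_from_cuboids(points, boxes))  # [7, 0]
```

Points left unlabeled, or points near cuboid faces, are the natural candidates for the precision tools described above.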

QUALITY BUILT FOR SEGMENTATION

BOUNDARY ACCURACY

Clear edge policies and targeted review for high-impact boundaries relevant to planning and mapping.

TAXONOMY CONSISTENCY

Stable class definitions and drift prevention across batches to maintain training reliability at scale.

TEMPORAL CONSISTENCY

Sequence checks to reduce label flicker and improve training signal in time-ordered data.
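One simple form of sequence check, sketched here under assumed inputs, flags tracked objects whose class changes between frames (for example car, then truck, then car again), which is a typical source of flicker. The frame format (a dict mapping a hypothetical track ID to a class name) and the function name are illustrative assumptions, not a specific export schema.

```python
from collections import defaultdict


def find_class_flicker(frames):
    """Find class switches per track across a time-ordered sequence.

    frames: list ordered by time; each item maps a track id to the class
    label it carries in that frame, e.g. {"obj_12": "car"}.
    Returns {track_id: [frame indices where the class changed]}.
    """
    last_class = {}
    switches = defaultdict(list)
    for index, frame in enumerate(frames):
        for track_id, label in frame.items():
            previous = last_class.get(track_id)
            # A switch is only counted against the track's last seen label,
            # so frames where the object is absent are skipped.
            if previous is not None and previous != label:
                switches[track_id].append(index)
            last_class[track_id] = label
    return dict(switches)


frames = [
    {"obj_1": "car", "obj_2": "pedestrian"},
    {"obj_1": "truck", "obj_2": "pedestrian"},
    {"obj_1": "car"},
]
print(find_class_flicker(frames))  # {'obj_1': [1, 2]}
```

The sketch only flags switches rather than correcting them, because some class changes may be legitimate and are better settled by a reviewer.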

OPERATIONAL QA

Acceptance criteria, sampling plans, and structured review loops aligned to your internal validation process.

Case Studies

iMerit collaborated with a leading autonomous vehicle company to deliver the high-precision LiDAR and 3D point cloud annotations required for a robust 3D perception stack. We deployed a specialized team of experts who completed a rigorous three-level training program and used the client’s proprietary tools to meticulously annotate complex road features and targets. This partnership provided the accurate “ground truth” data the client needed to ensure reliable object detection and safe autonomous movement of their vehicles.

The engagement covered data annotation across 2D images and 3D point clouds. Because 3D perception performance depends heavily on data quality, the company needed targets identified in LiDAR frames, including lane markings, road boundaries, traffic lights, and other road features.

Using our human-in-the-loop workflows, we labeled poles, pedestrians, signs, cars, and barriers in 3D LiDAR frames accurately and without disrupting the client's pipeline.

How We Deliver at Scale

Rapid Scalability

All-Sensor Support

High Quality

Tool Ecosystem

Custom Engineering

Security

Frequently Asked Questions

What is multi-sensor fusion in autonomous mobility?

Multi-sensor fusion combines inputs from sensors such as cameras, LiDAR, and radar to improve perception reliability. It helps models handle occlusion, lighting changes, and sensor-specific blind spots by learning from complementary signals.

What types of annotations are used in multi-sensor fusion datasets?

Multi-sensor fusion programs typically include 2D bounding boxes, 3D bounding boxes (cuboids), polygon annotation, semantic segmentation, and object tracking. The right mix depends on whether the perception stack prioritizes detection, tracking, mapping, or dense scene understanding.

How do you keep labels consistent across camera, video, point clouds, and radar?

We define a unified labeling taxonomy and label specification for your program, then enforce it consistently across sensor streams. Cross-stream QA checks reduce mismatches between 2D and 3D labels and help maintain class and boundary consistency across sequences.
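As one illustration of a cross-stream check, the sketch below projects the eight corners of a 3D cuboid into a camera image with a pinhole model and compares the enclosing rectangle with the 2D box labeled for the same object. It assumes the corners are already transformed into the camera frame (x right, y down, z forward) using calibrated extrinsics, ignores lens distortion, requires every corner to be in front of the camera, and uses a hypothetical `min_iou` threshold of 0.5; none of these details come from a specific tool.

```python
def project_point(point, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point (x right, y down, z forward)."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point is behind the camera")
    return fx * x / z + cx, fy * y / z + cy


def projected_box(corners, fx, fy, cx, cy):
    """Axis-aligned (x_min, y_min, x_max, y_max) box around projected corners."""
    pixels = [project_point(c, fx, fy, cx, cy) for c in corners]
    us = [u for u, _ in pixels]
    vs = [v for _, v in pixels]
    return min(us), min(vs), max(us), max(vs)


def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def check_2d_3d_agreement(corners, box_2d, intrinsics, min_iou=0.5):
    """Return (agrees, iou) for a 3D cuboid and the 2D box of the same object.

    intrinsics: (fx, fy, cx, cy) in pixels.
    """
    score = iou(projected_box(corners, *intrinsics), box_2d)
    return score >= min_iou, score


# Usage: a 2 m cube centered 10 m in front of the camera.
corners = [(x, y, z) for x in (-1, 1) for y in (-1, 1) for z in (9, 11)]
print(check_2d_3d_agreement(corners, (530, 250, 750, 470), (1000, 1000, 640, 360)))
```

Here the labeled 2D box almost matches the projected cuboid (IoU of roughly 0.98), so the pair passes; a pair that fails would be routed to review rather than silently accepted.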

Do you support sequences and temporal labeling for fusion models?

Yes. We support video and multi-frame sequences, including object tracking and sequence consistency checks to reduce label drift across frames. This is important for motion understanding and temporal fusion use cases.

How does Ango Hub support multi-sensor fusion workflows?

Ango Hub supports multi-sensor fusion workflows by enabling teams to manage annotation across synchronized sensor streams and apply consistent labeling rules within a governed workflow. It helps streamline production with role-based access controls, built-in review and QA steps, and exports aligned to your training and evaluation pipeline.

GETTING STARTED!

The need for generative AI training data services has never been greater. iMerit combines the best of predictive and automated technology with world-class subject matter expertise to deliver the data you need to get to production, fast.