AI systems do not build themselves. Every chatbot, medical tool, and autonomous system depends on human judgment at each stage of its development. But not all of that human judgment looks the same. A person identifying objects in a photo and a surgeon reviewing a clinical AI’s diagnostic reasoning are both doing AI training work, yet the demands on each are entirely different. Understanding task complexity in AI training is what separates professionals who stay at entry-level roles from those who move into the high-impact, high-value work that shapes frontier AI.

This blog maps the full spectrum of task complexity in AI training, from foundational data labeling to expert-level annotation, and explains what skills each level demands and how you can grow through them.

The Foundational Level: Data Labeling and Simple Reviews

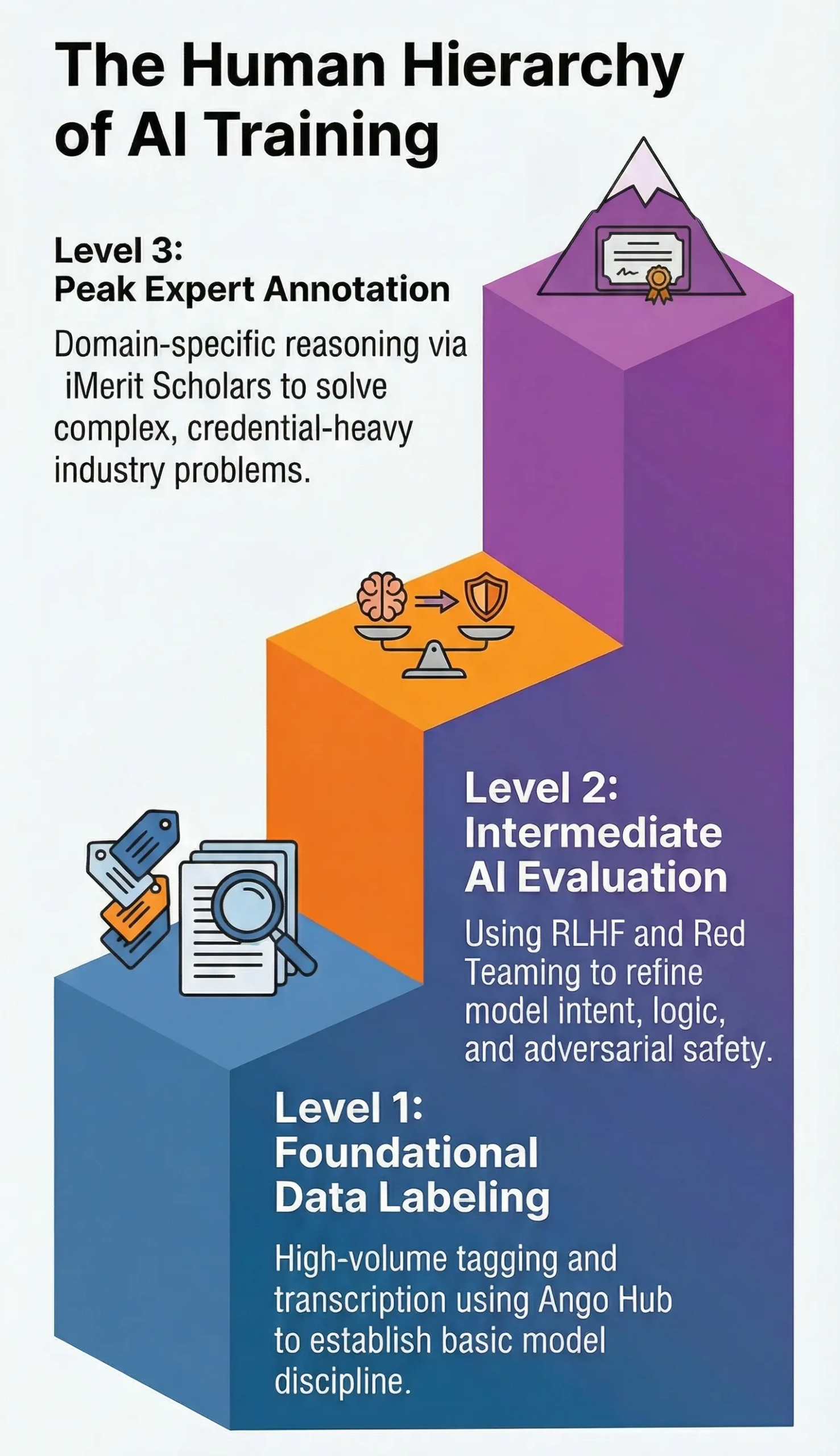

Every AI training career starts somewhere, and for most people, that starting point involves structured, rule-based tasks applied at volume. Data labeling is the clearest example.

At this level, you might identify objects in photographs, transcribe spoken audio, or tag segments of text by category. You might review AI-generated responses and rate them on basic criteria such as accuracy, tone, or relevance to the user’s question. The task instructions are detailed. The criteria are defined. Your job is to apply them consistently and quickly.

Platforms like Ango Hub are built precisely for this kind of structured annotation work. Ango Hub supports image, video, text, and audio annotation workflows, giving annotators a consistent environment to apply guidelines at scale across different data types and projects.

This foundational human-in-the-loop work matters more than it looks. Every label you apply teaches the model something. If your labels are inconsistent or carelessly applied, the model learns inconsistency. The core skill here is not expertise. It is discipline. Sustaining quality across high volumes of repetitive work, without rushing or cutting corners, is the professional standard. Foundational tasks are where most people begin, but they are not where the work ends.

Intermediate Complexity: Reasoning and Adversarial Testing

As AI systems become more sophisticated, the tasks required to train them move beyond simple tagging. The AI evaluation tasks at this level require you to think about intent, logic, and failure modes, not just correct categorization.

Writing and evaluating prompts is one of the clearest examples. Instead of rating a response that already exists, you craft prompts designed to test how the model handles real user intent. Strong prompt writers understand how real people ask questions, which is often very different from how developers phrase them. You then evaluate the model’s responses for accuracy, structure, relevance, and alignment with what the user actually needed.

RLHF, or Reinforcement Learning from Human Feedback, sits at the heart of many intermediate-level tasks. In RLHF workflows, human reviewers compare model outputs and provide preference signals that directly shape how the model is updated. This is not a passive rating. Your comparative judgments feed directly into the model’s training loop, making the quality of your reasoning consequential in a very direct way.

Red teaming represents a different kind of complexity. Here, you deliberately try to break the model. You probe it with adversarial prompts, unusual edge cases, and scenarios designed to expose where it fails, generates biased output, hallucinates facts, or produces harmful content. The skill is adversarial thinking, the ability to reason like someone trying to exploit a system rather than someone trying to use it normally.

Chain-of-thought reasoning tasks push further still. Rather than scoring a final answer, you work through a problem step by step alongside the model, identifying where its reasoning breaks down. This is common in mathematics, logic, and complex decision-making domains. It requires structured thinking and, often, genuine subject matter knowledge.

RAG Fine-Tuning adds another dimension. When AI systems are built to retrieve and synthesize information from external sources, human reviewers assess whether the model is grounding its responses in the retrieved content correctly, whether it is ignoring relevant passages, or whether it is fabricating details that the source material does not support. Evaluating retrieval-augmented outputs requires careful reading and a strong sense of what faithful summarization looks like versus what hallucination looks like.

Beyond the tasks themselves, intermediate-level work also introduces a new layer of professional responsibility: adhering to project-specific processes, guidelines, and quality standards. Following escalation protocols, flagging ambiguous cases correctly, maintaining audit trails, and calibrating consistently with your team are not administrative formalities. They are part of the work. At this level, the quality of your process directly affects the reliability of the training signal you produce.

These AI evaluation tasks bridge foundational annotation and expert-level work. They require more than guideline adherence. They require judgment.

The Peak of Complexity: Expert-Level Annotation

At the highest level of understanding task complexity in AI training, professional credentials and deep subject matter knowledge become the primary qualifications. This is where the work is most consequential and where the gap between a qualified reviewer and an unqualified one is most visible.

Domain-specific evaluation means that a doctor reviews medical AI outputs, a lawyer evaluates legal reasoning models, and a bilingual linguist assesses translations in a language that very few people speak fluently. General reviewers can identify that something sounds wrong. Domain experts can identify exactly why it is wrong, where the reasoning failed, and what the correct answer should be.

Hallucination and bias detection at this level requires genuine depth. A hallucinated medical fact, a legally incorrect interpretation, or a biased conclusion in a financial model can all sound entirely plausible to a non-expert. Catching these errors requires the kind of pattern recognition that only comes from professional experience in the field.

Agent evaluation is among the most demanding AI evaluation tasks emerging in the industry. AI agents take autonomous actions, whether executing code, managing files, interacting with external systems, or completing multi-step workflows. Evaluating them means assessing not just whether a single output is correct, but whether a sequence of decisions is safe, intentional, and aligned with what the user actually wanted. This requires systems thinking applied to complex, often unpredictable behavior.

Domain expertise is the differentiator at this level. It is not something you can fake, and it is what makes expert-level annotation both difficult to staff and disproportionately valuable to AI development teams.

Watch how expert-led evaluation works inside the Ango Deep Reasoning Lab.

VIDEO – ANGO HUB DEEP REASONING LAB

Skills That Evolve Across the Complexity Spectrum

Several skills appear at every level of AI training work, but what they demand of you changes significantly as complexity increases.

Attention to detail at the foundational level means applying a label correctly and consistently. At the expert level, it means noticing that a clinical AI response uses a term in a subtly incorrect clinical context, a distinction that would be invisible to a non-specialist.

Analytical thinking at the intermediate level means distinguishing between two responses that are both factually correct but differ in how they communicate. At the expert level, it means identifying a flaw in a chain of reasoning that would lead a model, and potentially a user, to a wrong conclusion.

Comfort with structured guidelines evolves from following a checklist to interpreting the intent behind that checklist. Guidelines cannot anticipate every situation. At higher complexity levels, you apply the principle, not just the rule.

While data labeling builds the professional foundation, domain expertise opens the door to work that pays more, carries more responsibility, and directly influences the behavior of AI systems used by millions of people.

Industry Applications of Complex AI Training

The demand for complex AI training work is concentrated in industries where errors carry real-world consequences.

Healthcare AI is one of the most active and consequential domains. Incorrect outputs from a medical AI can affect clinical decisions. This is precisely why organizations like iMerit work with credentialed medical professionals through the iMerit Scholars Program, ensuring that the human expertise shaping these systems is deep, accountable, and real.

Autonomous mobility generates some of the most technically demanding annotation work available. Companies building perception AI for self-driving systems need specialists who understand real-world edge cases, particularly in 3D sensor fusion and mapping environments where standard datasets do not capture the full range of conditions a vehicle will encounter.

Frontier model development demands continuous human-in-the-loop work across all modalities and languages. The large foundation models that power products used by millions require ongoing evaluation from professionals who can assess quality, safety, and alignment at the level these systems operate.

These industries do not just need large numbers of annotators. They need the right people with the right expertise, and they are actively building programs to find them.

Career Pathways: Navigating Complexity

Understanding where you sit on the task complexity spectrum is the first step toward a deliberate career path in AI training.

Most people begin as AI trainers or annotators, building speed, accuracy, and familiarity with evaluation frameworks through platforms like Ango Hub. The focus is on consistency and quality at volume. This stage develops the professional discipline that all subsequent work depends on.

With experience, many practitioners advance to reviewer or quality analyst roles, assessing others’ work, identifying patterns in errors, and helping maintain the quality standards that model development requires. This stage demands a stronger grasp of what makes training data high-quality and why particular guidelines exist.

The most significant jump comes when domain expertise becomes the primary credential. At this stage, you are not reviewing an annotation against a rubric. You are applying professional judgment that the rubric cannot fully capture. This is the level the iMerit Scholars Program is designed for.

The Scholars Program brings credentialed domain experts across medicine, law, mathematics, linguistics, and the sciences directly into the AI development process. Work is conducted through the Ango Deep Reasoning Lab, a platform built specifically for expert-level AI collaboration that goes beyond general annotation into structured reasoning and iterative feedback. In one engagement with a global technology company, iMerit deployed more than 80 Scholars across multiple disciplines, completing over 5,000 tasks across 30 types of reasoning. This is not crowd annotation. It is a structured, high-stakes collaboration between professional experts and AI development teams.

Beyond expert annotation, the skills developed across this career spectrum transfer into AI product management, data operations, AI ethics, and model development roles. The professionals who master various AI evaluation tasks are building expertise that the broader AI industry actively needs. If you are exploring how to build an AI training career, this guide breaks down the skills, domains, and growth pathways in detail.

How to Get Started

Getting started in AI training does not require a technical background. It requires starting with what you already know and building deliberately from there.

Begin by identifying the domain where your professional background or academic training is strongest. AI is being actively developed in almost every field, and that expertise is more relevant than most people realize.

Next, get familiar with how evaluation tasks are actually structured before applying anywhere. Many AI companies publish sample guidelines and evaluation rubrics publicly. Reading through a few of these gives you a realistic sense of what the work involves and helps you develop the kind of judgment that hiring teams look for. Do not skip this step. Understanding the intent behind a rubric, not just its surface rules, is exactly what distinguishes a strong applicant from one who treats the work as mechanical.

Build familiarity with annotation platforms. Ango Hub is one of the industry’s leading tools, supporting text, image, video, and audio annotation workflows. Getting comfortable with how professional annotation environments work will reduce the learning curve when you start.

Then apply directly. The most direct path is through companies like iMerit that connect domain experts with AI development projects at scale. If your expertise is at the level where advanced AI systems genuinely need someone with your background, the iMerit Scholars Program is a strong and structured place to start.

Conclusion

Understanding task complexity in AI training is not just a conceptual exercise. It is a practical map for building a career with direction and purpose.

Foundational data labeling builds the professional habits that all higher-level work depends on. Intermediate tasks, including RLHF, RAG evaluation, chain-of-thought reasoning, and red teaming, develop the analytical and adversarial thinking that help shape more capable models. Expert-level annotation applies professional credentials and deep domain knowledge to the problems that matter most and that are hardest to solve without the right human expertise.

The future of AI is shaped by people who bring their human judgment, their professional backgrounds, and their disciplined thinking to this work. Start with what you already know. Review how evaluation rubrics are structured. Build toward greater complexity. And if your expertise is at the level where AI systems genuinely need someone like you, explore the structured, rigorous path that the iMerit Scholars Program offers. Join here.