Scaling LiDAR annotation for production AI requires domain-trained annotators, sensor-aware tooling, and multi-stage quality assurance built into every step of the data pipeline. Perception teams working with 3D point cloud data know that raw LiDAR captures are dense, noisy, and inherently three-dimensional, making them far more complex to label than 2D imagery. A perception model’s path to production runs directly through the annotation operation that converts raw LiDAR scans into reliable ground truth. For teams building autonomous vehicles, robotics platforms, or geospatial applications, the annotation operation must be engineered with the same rigor as the model architecture.

Turning Raw LiDAR into Reliable 3D Ground Truth

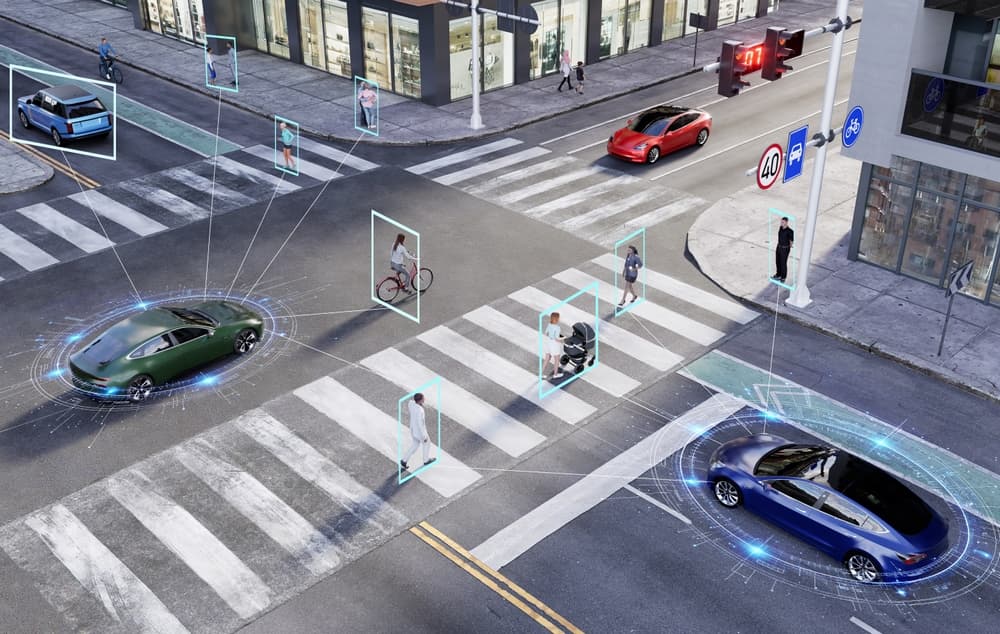

LiDAR sensors generate point clouds by emitting laser pulses and measuring how long each pulse takes to return after hitting a surface. The result is a spatially precise but visually sparse representation of the environment. Converting these captures into ML-ready ground truth requires annotators to interpret 3D spatial relationships, identify partially occluded objects, and apply consistent class labels across thousands of frames.
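The time-of-flight principle described above can be sketched in a few lines. This is a minimal illustration, not any particular sensor's firmware: the function names and the axis convention (x forward, y left, z up) are assumptions made for the example.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second, in vacuum


def range_from_time_of_flight(round_trip_s: float) -> float:
    """Distance to the surface. The pulse travels out and back, so halve the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def polar_to_cartesian(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one return to (x, y, z) in the sensor frame.

    Assumed convention for this sketch: x forward, y left, z up.
    """
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )
```

A round trip of one microsecond corresponds to a surface roughly 150 m away, which is why small timing errors show up as visible noise in the point cloud.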

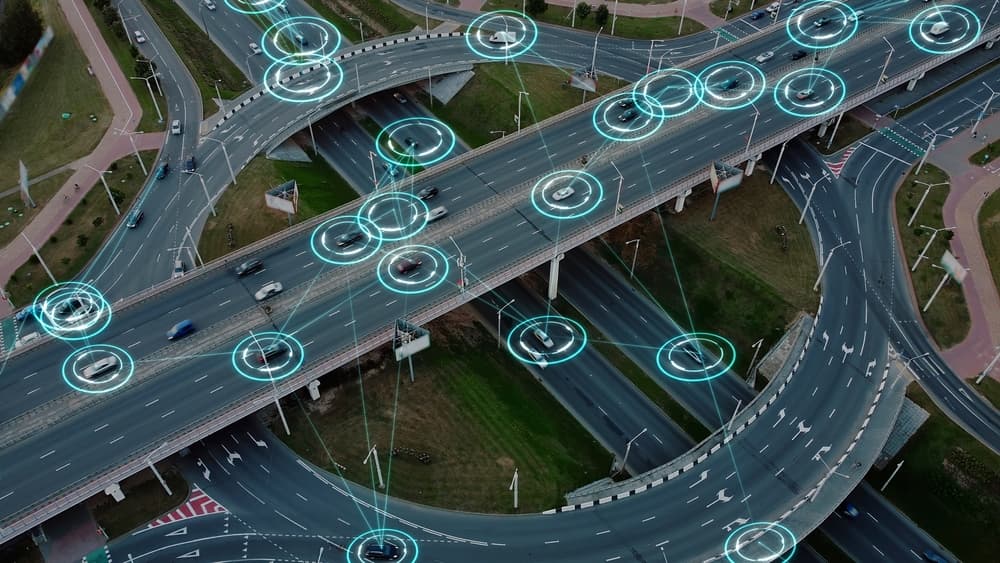

The complexity varies by use case. Autonomous driving pipelines typically require 3D cuboid annotation around vehicles, pedestrians, and cyclists, along with semantic segmentation of road surfaces and static infrastructure. SemanticTHAB, for example, contains 4,750 LiDAR point clouds from urban environments labeled across 20 semantic classes, providing the kind of dense, per-point ground truth that 3D segmentation models need to train effectively.

Airborne LiDAR datasets present additional challenges because point density varies significantly with altitude and terrain, forcing annotators to work with uneven coverage across a single scan. SensatUrban, for example, includes nearly three billion labeled points from several UK cities and highlights how fine-grained urban categories like benches, bollards, and rail lines remain sparse and hard to annotate consistently even at city scale.

Infrastructure-focused LiDAR, such as scans of busy intersections, bridges, and tunnels, adds another layer of difficulty. Annotators need to separate static structures from moving vehicles and pedestrians, often at centimeter-level precision, while adjusting each 3D bounding box for position, size, and orientation. Researchers behind the University of Florida’s FLORIDA dataset described this work as ‘time-consuming and expensive’ even with semi-automated tools. Scale that across a full production pipeline, and it becomes clear why expert annotators, structured QA, and smart workflow design matter so much.
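To make "position, size, and orientation" concrete, here is one possible way to represent a 3D bounding box and test whether a point falls inside it. The `Cuboid` class and its field names are hypothetical, chosen for this sketch, and orientation is simplified to a single yaw angle about the vertical axis.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """Hypothetical 3D box label: centre, dimensions, and heading."""
    cx: float
    cy: float
    cz: float
    length: float  # extent along the box's own x axis
    width: float   # extent along the box's own y axis
    height: float  # extent along z
    yaw: float     # rotation about z, in radians
    label: str

    def contains(self, x: float, y: float, z: float) -> bool:
        # Shift the point so the box centre is the origin.
        dx, dy, dz = x - self.cx, y - self.cy, z - self.cz
        # Rotate by -yaw to express the point in the box's own axes.
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        local_x = c * dx + s * dy
        local_y = -s * dx + c * dy
        return (
            abs(local_x) <= self.length / 2
            and abs(local_y) <= self.width / 2
            and abs(dz) <= self.height / 2
        )
```

A check like this is the kind of building block a tool could use to count how many points a box actually encloses, which helps reviewers spot boxes that are too loose or rotated the wrong way.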

Architecting LiDAR Annotation Pipelines That Match Real Use Cases

Effective LiDAR annotation pipelines need to be designed around the specific sensor configuration and deployment context, not adapted from generic 2D labeling workflows. Beam count, field of view, angular resolution, and range all influence what the annotator sees and how they should label it.

In autonomous mobility applications, annotation pipelines need to track objects consistently from one frame to the next. An object labeled as a vehicle in frame 12 must maintain its identity through frame 200, even as it moves or becomes occluded. Merged point cloud workflows, which transform every scan in a sequence into a single shared coordinate frame, give annotators a holistic view of object sequences and reduce inconsistent labels across time.
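The merging step can be sketched as follows, assuming each scan comes with a known sensor pose. The pose format `(tx, ty, tz, yaw)` and both function names are assumptions for illustration; real pipelines typically use full 6-DoF poses from localization.

```python
import math


def to_world(points, pose):
    """Transform sensor-frame points into the world frame.

    pose = (tx, ty, tz, yaw): assumed sensor position and heading in the world.
    """
    tx, ty, tz, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return [(c * x - s * y + tx, s * x + c * y + ty, z + tz) for x, y, z in points]


def merge_sequence(frames):
    """Merge a list of (points, pose) scans into one world-frame cloud.

    Each merged point keeps its source frame index so labels can be
    written back to the individual frames afterwards.
    """
    merged = []
    for frame_idx, (points, pose) in enumerate(frames):
        for point in to_world(points, pose):
            merged.append((frame_idx, point))
    return merged
```

In the merged view, a parked car seen from a moving sensor collapses onto the same world coordinates in every frame, so a single box and a single track ID can cover the whole sequence.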

Pipeline architecture also needs to account for annotation taxonomy. A project requiring only 3D bounding boxes operates very differently from one demanding per-point semantic segmentation across 20 or more classes. Matching workflow design to the production requirements of the perception model prevents wasted effort while ensuring the dataset captures the distinctions the model needs to learn.

Operational Strategies for Scaling LiDAR: Fusion, Workflows, and QA

Scaling 3D point cloud annotation without sacrificing quality depends on sensor fusion, workflow automation, and structured quality assurance.

Multi-sensor fusion annotation, where LiDAR data is labeled alongside synchronized camera imagery and radar returns, gives annotators additional context that improves labeling accuracy. Research on real-time object detection has shown that fusing LiDAR depth information with camera-based RGB features at the feature layer yields significant gains in detection performance over single-modality approaches. Annotations produced this way, including 2D/3D linking, bounding boxes, and point cloud segmentation, in turn give perception models a richer training signal.
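2D/3D linking rests on projecting LiDAR points into the camera image. A minimal sketch using the standard pinhole camera model is shown below. It assumes the point has already been transformed into the camera frame (x right, y down, z forward), and the intrinsics in the usage note are placeholder values, not those of any real camera.

```python
from typing import Optional, Tuple


def project_to_image(
    point_cam: Tuple[float, float, float],
    fx: float, fy: float, cx: float, cy: float,
    width: int, height: int,
) -> Optional[Tuple[float, float]]:
    """Project a camera-frame 3D point to pixel coordinates (pinhole model).

    Returns None when the point is behind the camera or outside the image.
    """
    x, y, z = point_cam
    if z <= 0.0:
        return None  # behind the image plane, not visible
    u = fx * x / z + cx
    v = fy * y / z + cy
    if 0.0 <= u < width and 0.0 <= v < height:
        return (u, v)
    return None
```

For example, with assumed intrinsics `fx = fy = 1000`, `cx = 640`, `cy = 360` and a 1280 by 720 image, a point 10 m straight ahead lands on the image centre. Points that project inside a 2D box are candidates for the matching 3D cuboid.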

Workflow automation reduces the repetitive burden on annotators without removing human judgment from the loop. Pre-labeling with trained ML models handles routine object detection, allowing annotators to focus on verifying, correcting, and labeling edge cases. Platform capabilities like API integration, automated task routing, and custom workflow stages keep throughput high while maintaining a clear separation between production annotation and quality review.
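One way to keep humans in the loop while still benefiting from pre-labels is to route each model prediction by its confidence score. The sketch below is illustrative only: the queue names, the `confidence` field, and both thresholds are assumptions that would need tuning per project.

```python
def route_prelabels(prelabels, verify_at=0.9, correct_at=0.5):
    """Sort model pre-labels into human work queues by confidence.

    Thresholds are hypothetical defaults. Every pre-label still reaches a
    person; only the expected amount of work differs per queue.
    """
    queues = {"quick_verify": [], "correct": [], "label_manually": []}
    for label in prelabels:
        score = label["confidence"]
        if score >= verify_at:
            queues["quick_verify"].append(label)    # likely right, confirm it
        elif score >= correct_at:
            queues["correct"].append(label)         # adjust box or class
        else:
            queues["label_manually"].append(label)  # treat as an edge case
    return queues
```

Keeping the low-confidence queue separate also gives project managers a running count of edge cases, which is useful input for the next round of model training.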

Structured QA remains the critical differentiator at scale. Two-step workflows, where separate annotators and reviewers handle each frame, catch errors before they enter the training pipeline. Real-time analytics tracking annotator performance and edge case frequency allow project managers to intervene early when quality drifts. These are the kinds of operational controls that separate research-grade annotation from production-ready data pipelines.
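The drift monitoring described above could be approximated with a rolling acceptance rate per annotator. This is a sketch under stated assumptions: the event format `(annotator_id, accepted)`, the window size, and the 95% threshold are all hypothetical choices, not values from any specific platform.

```python
from collections import defaultdict, deque


def flag_quality_drift(review_events, window=50, min_accept_rate=0.95):
    """Return annotators whose recent reviewer acceptance rate is too low.

    review_events: iterable of (annotator_id, accepted) pairs in time order,
    where accepted is True when the reviewer passed the frame unchanged.
    Only the most recent `window` reviews per annotator are considered.
    """
    recent = defaultdict(lambda: deque(maxlen=window))
    for annotator_id, accepted in review_events:
        recent[annotator_id].append(bool(accepted))

    flagged = {}
    for annotator_id, results in recent.items():
        rate = sum(results) / len(results)
        if rate < min_accept_rate:
            flagged[annotator_id] = rate
    return flagged
```

Because the window only looks at recent reviews, an annotator who starts making mistakes on a new object class is flagged quickly, even if their long-run average is still high.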

Partner with iMerit for Production-Ready LiDAR and 3D Point Cloud Annotation

Building a 3D perception system that performs reliably in the real world starts with the data behind it. iMerit provides software-delivered services for data annotation and model fine-tuning by unifying automation, human domain experts, and analytics into a single end-to-end solution.

Our 3D Point Cloud Annotation Services and 3D Point Cloud tool are built for production-scale operations, with trained annotators experienced in semantic segmentation, 3D cuboid annotation, landmark labeling, polygon annotation, and polyline annotation.

We support all sensor types, including multi-sensor fusion across LiDAR, radar, and camera data, and we adapt to proprietary tools or deploy our Ango Hub platform with custom workflows, auto-annotation, and real-time reporting. With 6,000+ full-time annotation experts and ISO 27001, SOC 2, and HIPAA compliance, we scale securely without compromising accuracy.

Contact our experts today to accelerate your LiDAR data operations and help your perception models reach production faster.