Cobots and autonomous mobile robots (AMRs) depend on high-quality multimodal perception data to operate safely alongside humans and navigate dynamic environments. The performance ceiling for these systems is set not by the model architecture, but by the training data feeding it. Whether the application is warehouse logistics, medical delivery, or factory-floor assembly, perception teams face the same core problem: building datasets diverse enough in environment, sensor input, and edge-case coverage to hold up once the robot leaves the lab. A cobot that freezes at every unexpected shadow or an AMR that misjudges a cluttered aisle can usually trace the failure back to gaps in its training data. Closing those gaps requires high-quality annotations, tight multi-sensor alignment, and deep domain knowledge of the deployment environment.

Why Cobots and AMRs Need Better Perception Data

Human Proximity

Cobots and AMRs operate in close physical proximity to humans, which places extraordinary demands on their perception systems. Collaborative robots must continuously interpret the positions, movements, and intentions of nearby workers. A cobot on a shared assembly line needs to detect a human hand reaching into its workspace within milliseconds, and a delivery AMR navigating a hospital corridor must distinguish between a rolling cart and a patient in a wheelchair.

Predictive Perception and Safety Benchmarks

Collision avoidance requires more than detecting where a person is right now. The perception system also needs to predict where that person is moving next. Perceived safety in human-cobot interaction depends on factors like robot speed, distance, and motion predictability, which means training data must capture a full range of human postures, gestures, and movement patterns. Vision alone isn’t enough. Combining visual action recognition with tactile contact detection produces more reliable safety outcomes than either modality on its own, with tactile perception achieving 96% accuracy in distinguishing intentional contact from incidental collisions.
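To make the "where is that person moving next" idea concrete, here is a minimal sketch that extrapolates a tracked person's position under a constant-velocity assumption and checks the smallest predicted distance to the robot. The `TrackedPerson` fields, the one-second horizon, and the 1.0 m slow-down threshold are all illustrative assumptions, not values taken from any standard or product.

```python
import math
from dataclasses import dataclass


@dataclass
class TrackedPerson:
    # Position (metres) and velocity (metres/second) in the robot's frame.
    # Field names are hypothetical, chosen for this sketch only.
    x: float
    y: float
    vx: float
    vy: float


def predict_position(person, dt):
    """Constant-velocity extrapolation dt seconds ahead."""
    return (person.x + person.vx * dt, person.y + person.vy * dt)


def min_predicted_distance(person, horizon_s=1.0, step_s=0.1):
    """Smallest predicted distance to the robot origin over the horizon."""
    steps = int(round(horizon_s / step_s))
    return min(
        math.hypot(*predict_position(person, i * step_s))
        for i in range(steps + 1)
    )


# A person 2 m away, walking straight toward the robot at 1.5 m/s.
person = TrackedPerson(x=2.0, y=0.0, vx=-1.5, vy=0.0)
current_distance = math.hypot(person.x, person.y)
predicted_distance = min_predicted_distance(person)

# Assumed threshold: slow down if the person is predicted to come within 1.0 m.
slow_down = predicted_distance < 1.0
```

The current distance alone (2 m) would not trigger a slow-down here, but the predicted distance (0.5 m) does. Real systems use richer motion models, which is exactly why the training data needs varied postures and movement patterns.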

Core Perception Challenges for Cobots and AMRs

Dynamic Environments and Localization

Perception dataset construction for cobots and AMRs is particularly demanding because these robots operate in environments where the scene changes continuously. A warehouse AMR cannot rely on a fixed map when pallets are rearranged hourly.

Close-Range Occlusion and Ambiguity

Close-range human interaction introduces significant occlusion and ambiguity. A cobot’s camera may see only a partial view of a human arm behind equipment, or the depth sensor may return noisy readings at very close range.

Temporal Consistency Across Frames

Cobots and AMRs both need to track objects and people across frames, maintaining identity even through occlusion events. A dataset with only single-frame annotations limits the model’s ability to reason about movement and predict trajectories.
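One way to check temporal consistency before training is to audit track annotations for missing frames and label changes. The sketch below assumes a simple, hypothetical record format of `(frame_index, track_id, label)`; real annotation exports will differ.

```python
from collections import defaultdict

# Hypothetical annotation records: (frame_index, track_id, label).
annotations = [
    (0, "p1", "person"), (1, "p1", "person"), (3, "p1", "person"),
    (0, "c1", "cart"), (1, "c1", "cart"), (2, "c1", "person"),
]


def audit_tracks(records):
    """Report frame gaps and label conflicts for each track ID."""
    frames = defaultdict(list)
    labels = defaultdict(set)
    for frame, track_id, label in records:
        frames[track_id].append(frame)
        labels[track_id].add(label)

    report = {}
    for track_id, seen in frames.items():
        seen.sort()
        # A gap is any pair of consecutive annotated frames more than 1 apart.
        gaps = [(a, b) for a, b in zip(seen, seen[1:]) if b - a > 1]
        report[track_id] = {
            "gaps": gaps,
            "label_conflict": len(labels[track_id]) > 1,
        }
    return report


report = audit_tracks(annotations)
```

A gap is not necessarily an error, since the object may have been fully occluded, but flagging it lets a reviewer confirm that the identity was carried through the occlusion rather than dropped.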

Multimodal Perception Datasets and Annotation Workflows

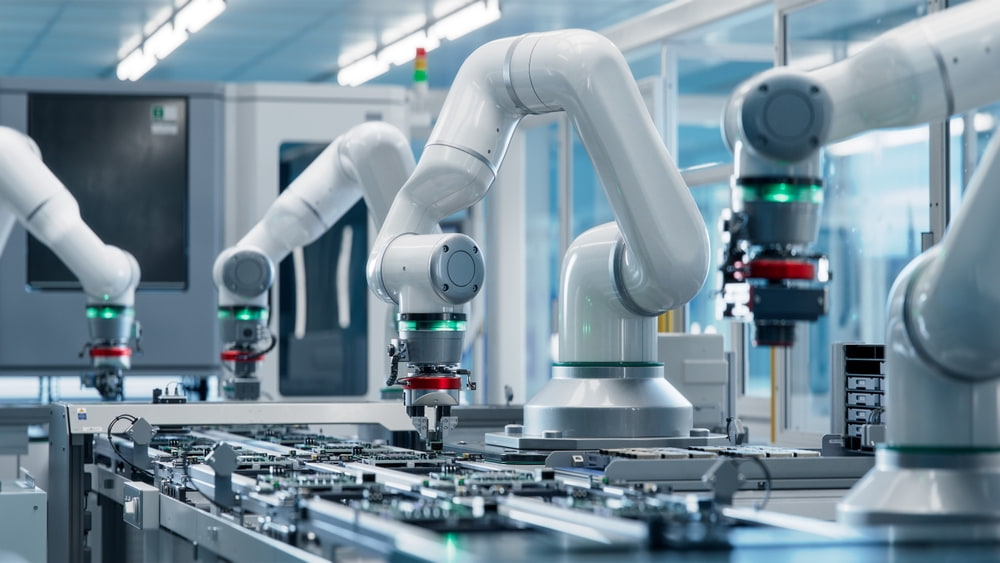

Modern cobots and AMRs rely on multiple sensor modalities working together. Cameras provide rich visual detail, LiDAR delivers precise depth measurements, radar offers robustness in poor visibility, and force/torque sensors give haptic feedback during manipulation. Building multimodal datasets that align these inputs requires careful synchronization and cross-modal annotation consistency.
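As a rough illustration of the synchronization step, the sketch below pairs each camera frame with the nearest LiDAR sweep by timestamp and drops frames that have no sweep within a tolerance. The function name, the 20 ms tolerance, and the sample timestamps are assumptions for this example; hardware-triggered rigs and interpolation-based approaches are also common.

```python
from bisect import bisect_left


def match_nearest(camera_ts, lidar_ts, tolerance_s=0.02):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    lidar_ts must be sorted ascending. Camera frames with no LiDAR
    sweep within tolerance_s are left unpaired.
    """
    pairs = []
    for t in camera_ts:
        i = bisect_left(lidar_ts, t)
        # The nearest sweep is either just before or just after t.
        candidates = lidar_ts[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda s: abs(s - t))
        if abs(best - t) <= tolerance_s:
            pairs.append((t, best))
    return pairs


# Camera at roughly 30 Hz, LiDAR at 10 Hz (timestamps in seconds).
camera = [0.000, 0.033, 0.066, 0.100]
lidar = [0.000, 0.100, 0.200]
pairs = match_nearest(camera, lidar)
```

Only the first and last camera frames are paired in this example. The two in between fall more than 20 ms from any sweep, so annotating them against LiDAR would risk misaligned labels on moving objects.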

Cross-Modal Alignment and Calibration

The annotation workflow for multimodal datasets is substantially more complex than for single-sensor pipelines. When a LiDAR point cloud and a camera image capture the same scene, the bounding box in the image must correspond precisely to the 3D cuboid in the point cloud. The CU-Multi dataset, released in 2025 by researchers at the University of Colorado Boulder and MIT, tackles this head-on for multi-robot teams. It supports realistic evaluation of collaborative perception and simultaneous localization and mapping (SLAM) by providing synchronized multimodal sensing, semantic LiDAR annotations, and refined odometry with controlled trajectory overlap across two urban environments. For perception teams working on AMR fleets, CU-Multi offers a way to benchmark how well fusion models hold up when multiple robots need to build a shared map of the same space.
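The correspondence between a 2D box and a 3D cuboid can be checked automatically. The sketch below projects the corners of an axis-aligned cuboid through a pinhole camera model and compares the enclosing rectangle with the annotated 2D box using intersection over union (IoU). It assumes the cuboid is already expressed in the camera frame (extrinsic calibration applied, z pointing forward) and ignores lens distortion; the intrinsics and box values are made up for illustration.

```python
from itertools import product


def cuboid_corners(center, size):
    """Eight corners of an axis-aligned cuboid in the camera frame."""
    cx, cy, cz = center
    sx, sy, sz = size
    return [
        (cx + dx * sx / 2, cy + dy * sy / 2, cz + dz * sz / 2)
        for dx, dy, dz in product((-1, 1), repeat=3)
    ]


def project(point, fx, fy, cx, cy):
    """Pinhole projection; assumes z > 0 (point in front of the camera)."""
    x, y, z = point
    return (fx * x / z + cx, fy * y / z + cy)


def enclosing_box(points_2d):
    us = [u for u, _ in points_2d]
    vs = [v for _, v in points_2d]
    return (min(us), min(vs), max(us), max(vs))


def iou(a, b):
    """IoU of two boxes given as (u_min, v_min, u_max, v_max)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


# Illustrative intrinsics and annotations (not from any real sensor).
intrinsics = dict(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
corners = cuboid_corners(center=(0.0, 0.0, 5.0), size=(1.0, 2.0, 1.0))
projected_box = enclosing_box([project(c, **intrinsics) for c in corners])
annotated_box = (265.0, 130.0, 375.0, 350.0)
agreement = iou(projected_box, annotated_box)
```

A low IoU between the projected cuboid and the annotated box points to either a labeling error or a calibration or synchronization problem. What counts as "low" depends on object size and range, so any threshold should be tuned per project.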

Safety-Critical HRI Data

For cobots operating alongside humans, perception datasets also need to capture the physical dynamics of human-robot interaction (HRI), including contact events. The LiHRA dataset, presented at IROS 2025, is the first dataset designed to enable learning-based and advanced risk assessment methods for HRI. It lets perception teams train and benchmark models that can differentiate between expected contact forces (like those during a handover) and potentially hazardous, unintended collisions. LiHRA labels not only the potential for a collision in each frame, but also the estimated force applied to the human, enabling context-aware risk and safety monitoring that aligns with ISO/TS 15066:2016. For teams building cobots for industrial settings, LiHRA offers a direct path to evaluating whether a perception system can reliably flag dangerous interactions before they cause harm.

Domain-Specific Taxonomies

Safety standards such as ISO 10218 don’t just define maximum contact force limits; they assume the robot can distinguish between different body regions, contact types, and task contexts in the first place. That translates directly into how perception teams design their label space: “person” is not a sufficient label when the system must tell a deliberate handover from an unplanned torso collision, or evaluate whether contact is happening near a sensitive body area.

In practice, a warehouse taxonomy needs categories like “loaded pallet,” “empty pallet,” “forklift approach zone,” and “operator torso,” while a medical taxonomy must differentiate “wheelchair occupant” from “visitor,” “IV pole,” or “gurney,” even though many of these appear as generic obstacles in driving datasets. The label space has to reflect the safety and operational decisions the robot will actually make.
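A taxonomy like this can be encoded as data so that annotation exports are validated against it. The sketch below uses the warehouse categories named above; the `LabelSpec` fields (`is_human`, `parent`) and the parent groupings are hypothetical attributes added for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabelSpec:
    name: str
    is_human: bool  # hypothetical flag: does this label involve a person?
    parent: str     # hypothetical coarser class for roll-up metrics


WAREHOUSE_TAXONOMY = {
    spec.name: spec
    for spec in [
        LabelSpec("loaded pallet", False, "pallet"),
        LabelSpec("empty pallet", False, "pallet"),
        LabelSpec("forklift approach zone", False, "zone"),
        LabelSpec("operator torso", True, "person"),
    ]
}


def unknown_labels(labels, taxonomy):
    """Return labels that are not defined in the taxonomy, in input order."""
    return [label for label in labels if label not in taxonomy]


exported = ["loaded pallet", "person", "operator torso"]
rejected = unknown_labels(exported, WAREHOUSE_TAXONOMY)
human_labels = sorted(n for n, s in WAREHOUSE_TAXONOMY.items() if s.is_human)
```

Here the generic "person" label is rejected because it is too coarse for the safety decisions described above, while the attribute flags let downstream code treat human-related classes differently without hard-coding label names.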

Edge Case Curation

Edge case curation is equally critical. The scenarios most likely to cause failures are rare by definition and underrepresented in passively collected data. A cobot may encounter a reflective surface that confuses its depth sensor once in a thousand operating hours, or an AMR may face a transparent glass door that barely registers on LiDAR. These situations almost never appear in routine data collection runs, which means teams need to actively stage, mine, or synthesize them. Combining automated anomaly detection with expert review is the most effective way to build targeted datasets that stress-test perception models where they are weakest.
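As one simple example of the automated half of that loop, the sketch below flags frames whose fraction of invalid depth pixels sits far from the dataset median, measured in median absolute deviations (MAD), and queues them for expert review. The metric, the frame IDs, and the cutoff `k=3.0` are assumptions for illustration; production pipelines typically combine several signals, such as model uncertainty or embedding distance.

```python
from statistics import median


def flag_outliers(scores, k=3.0):
    """Return frame IDs whose score is more than k MADs from the median."""
    values = list(scores.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # All scores (nearly) identical: nothing stands out by this measure.
        return []
    return sorted(f for f, v in scores.items() if abs(v - med) / mad > k)


# Hypothetical per-frame fraction of depth pixels with no valid reading.
invalid_depth_fraction = {
    "f001": 0.02, "f002": 0.03, "f003": 0.02,
    "f004": 0.41, "f005": 0.04, "f006": 0.03,
}
review_queue = flag_outliers(invalid_depth_fraction)
```

Frame `f004` stands out, which might correspond to a reflective or transparent surface. The automated pass only nominates candidates; a domain expert still decides whether the frame is a genuine edge case worth adding to the targeted set.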

Build Safer Cobots and AMRs with iMerit’s Robotics Annotation Solutions

Precision perception data is the foundation of every reliable cobot and AMR, and building that data at scale requires the right combination of technology, expertise, and quality assurance. iMerit delivers software-powered data annotation solutions for robotics perception teams by unifying automation, human domain experts, and analytics into a single end-to-end workflow.

Our annotation capabilities span multi-sensor fusion, 3D point cloud labeling, panoptic segmentation, object tracking, and custom taxonomy development, all backed by rigorous QA pipelines. With a team of 6,000+ expert annotators, ISO 27001 certification, and experience across warehouse, medical, agricultural, and industrial robotics applications, we help perception teams build the multimodal datasets that drive safer, more accurate robotic systems.

Contact our experts today to discuss how we can accelerate your robotics data operations.