Scaling Human Feedback for

Advanced AI Image Generation

A leading AI company partnered with iMerit to scale human feedback workflows that improved the performance and reliability of its image generation model.

Challenge

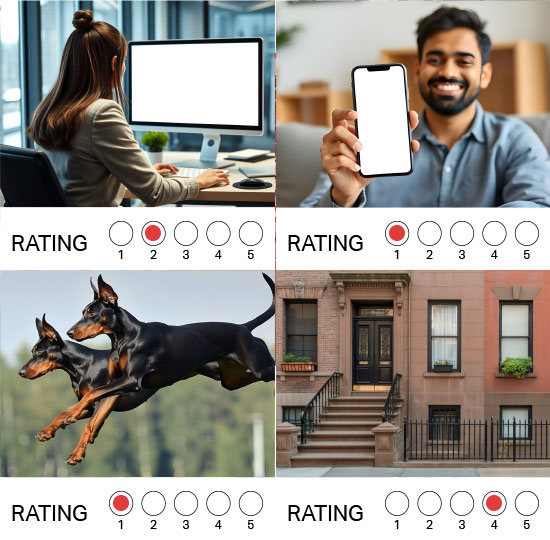

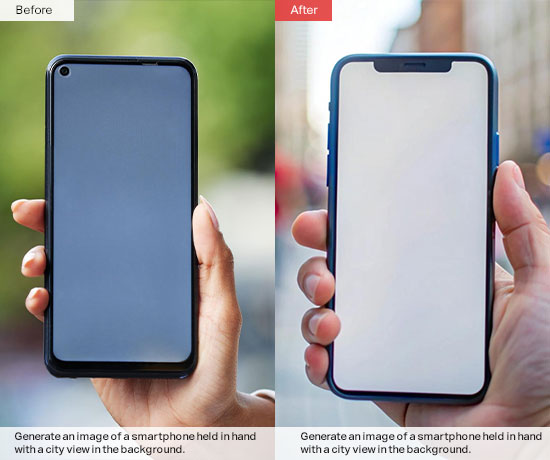

The client, a fast-growing AI company developing an advanced image generation and editing model, needed large volumes of structured human feedback to refine model outputs. As the model scaled, so did the need for high-quality comparison data, edit identification workflows, and multi-rater preference judgments.

The work required nuanced human evaluation and more than simple object labeling. Annotators needed to compare image outputs, identify meaningful visual differences, and provide structured feedback to improve model training. The client also required rapid turnaround cycles, high throughput, and consistent high quality standards – all without over-constraining subjective human judgment.

Existing internal workflows could not support the required scale or speed. The client needed a partner capable of rapidly deploying structured human-in-the-loop operations while maintaining data clarity and IP integrity.

“iMerit helped us scale human-in-the-loop evaluation for our

image generation models.”- Principal ML Engineer

Solution

- Deployed scalable HITL workflows across multiple concurrent projects

- Scaled specialist image and design teams for rapid, high-volume data generation

- Implemented calibration and QC loops to maintain consistency at speed

- Supported multi-rater preference modeling

- Executed sprint-based delivery cycles with structured quality governance

- Rapidly configured and iterated workflows in Ango to support hundreds of thousands of tasks across diverse task types

- Integrated seamlessly with the client’s internal tooling when available

To support the client’s rapidly evolving image generation model, we built a scalable human-in-the-loop program designed for speed, flexibility, and quality at volume. Rather than treating each workflow as a standalone effort, we implemented a structured operating model that could support multiple concurrent projects while adapting to shifting requirements.

We rapidly mobilized specialist image reviewers and design talent to power evaluation, annotation, and high-volume data generation. Work was executed in sprint-based cycles, allowing us to iterate quickly while maintaining throughput targets. As requirements evolved, we configured and refined workflows in Ango to support hundreds of thousands of tasks across diverse task types, enabling seamless task distribution, tracking, and quality oversight.

To ensure consistency at scale, we embedded calibration and QC loops across the program, supporting multi-rater preference modeling while allowing for appropriate subjectivity in evaluation tasks. Governance mechanisms were refined over time to balance speed with defensible quality standards.

The result was an agile, repeatable delivery framework capable of scaling rapidly, integrating with the client’s internal tooling where needed, and sustaining high-volume execution across complex and evolving model training initiatives.

Result

- 2M+ high-volume image tasks delivered across evaluation, preference modeling, and data generation workflows

- 4,000+ global specialists mobilized, often deployed within days to meet aggressive ramp timelines

- Consistent execution across 3–5 day sprint cycles, enabling rapid iteration aligned to model training needs

- Sustained quality at scale through embedded calibration and governance frameworks

- Flexible workflow orchestration in Ango, supporting hundreds of thousands of tasks across evolving task types

- Established a repeatable, scalable operating model capable of supporting ongoing, high-velocity AI development