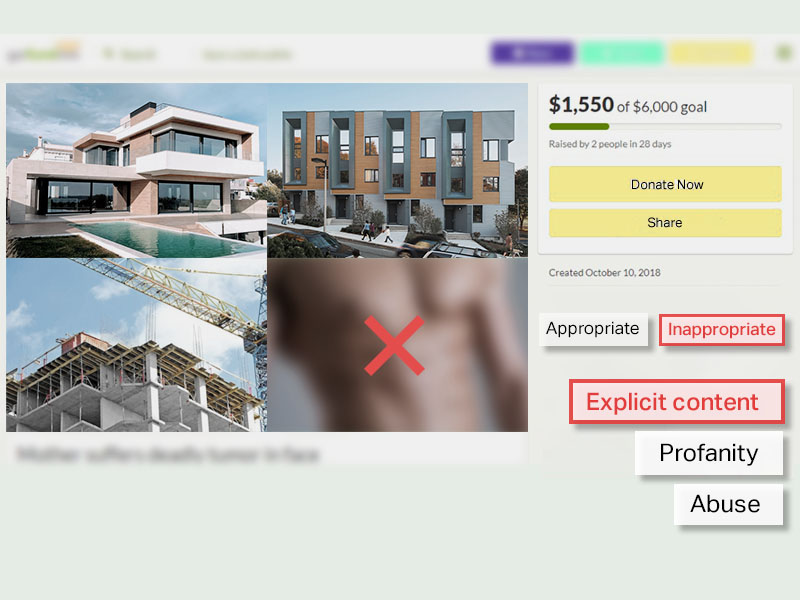

![Content moderation of user-submitted images for sensitive content, quality, and guideline violations.]()

Image moderation

Expert moderators evaluate user-submitted images on online communities and forums for sensitive content, quality, and guideline violations. Platforms can then accurately identify violence, offensive comments, and drug and weapon use, and add metadata to large datasets.

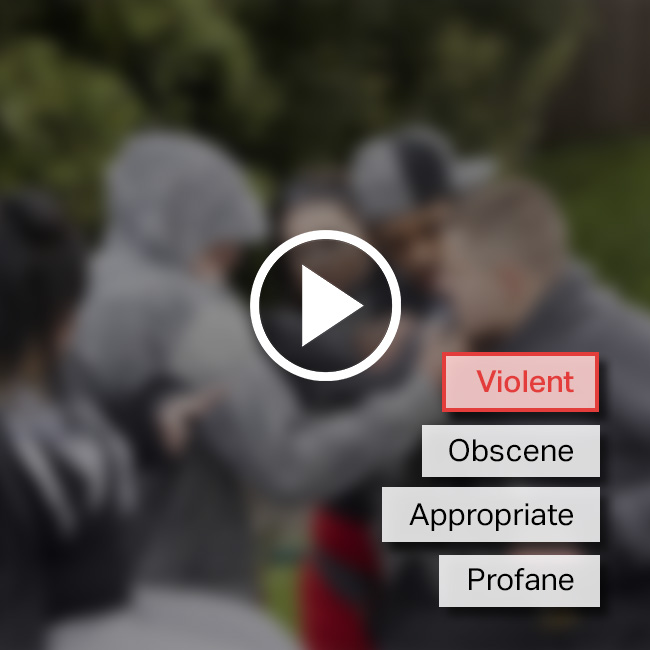

![Video content moderation to rate, evaluate and flag offensive video content.]()

Video moderation

Video content moderation helps rate, evaluate, and flag offensive video content and trolling that can harm a brand's image, and removes that content from the videos. iMerit expert moderators can review video frame by frame, as well as still images, with real-time reporting.

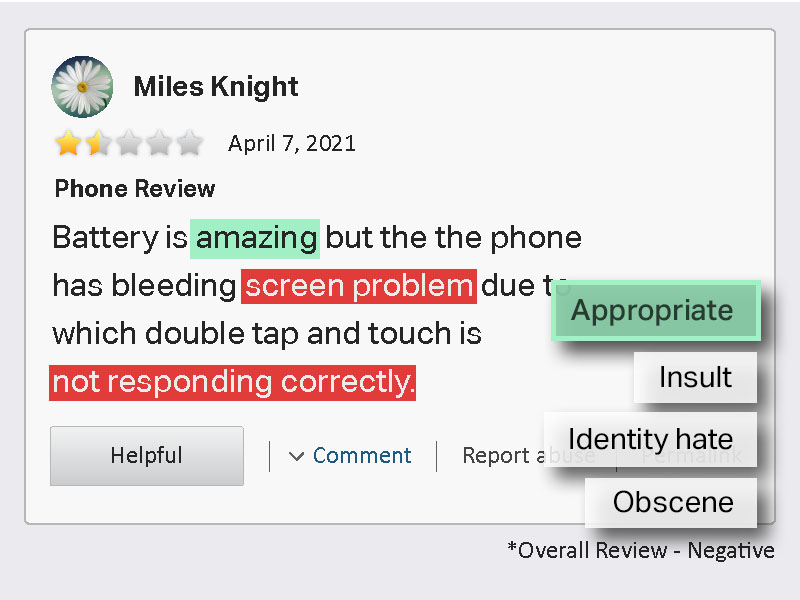

![Text moderation searches for duplicate content, offensive content, or content that does not comply, and removes it.]()

Text moderation

Text moderation is performed on documents, discussion boards, chatbot conversations, e-commerce catalogs, and chat room transcripts. Text moderators search for duplicate content, offensive content, and other content that does not comply with community standards, and remove it.